
Overview

Before you begin:

- This walkthrough was tested in an environment without Guardian security authentication enabled.

- Object Store is accessed through the API.

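By default, HBase handles structured and unstructured data in the same way. Unstructured data such as images, however, is very large: importing it into HBase makes Regions grow quickly and frequently triggers Region Splits and Compactions, which can stall client writes and degrade HBase insert performance. Large unstructured objects are therefore handled differently, so that Splits and Compactions happen less often during inserts and insert performance improves. Object Store was designed to solve this problem.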
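To make the size argument concrete, the following is a back-of-the-envelope sketch of how many rows fit into one Region before it reaches its split size. The 10 GiB threshold (the stock `hbase.hregion.max.filesize` value) and the two row sizes are assumptions chosen for illustration, not values taken from a TDH cluster; the class and method names are hypothetical.

```java
/** Rough estimate of how many rows fit into one Region before it reaches the split size. */
final class RegionFillEstimate {

    // ASSUMPTION: 10 GiB split threshold; check hbase.hregion.max.filesize on your cluster.
    static final long REGION_SPLIT_BYTES = 10L * 1024 * 1024 * 1024;

    /** Rows of the given average size that fill one Region (ignores compression and overhead). */
    static long rowsToFillRegion(long avgRowBytes) {
        if (avgRowBytes <= 0) {
            throw new IllegalArgumentException("avgRowBytes must be positive");
        }
        return REGION_SPLIT_BYTES / avgRowBytes;
    }

    public static void main(String[] args) {
        long structuredRow = 1024L;            // hypothetical 1 KiB structured row
        long imageRow = 5L * 1024 * 1024;      // hypothetical 5 MiB image
        System.out.println("1 KiB rows per Region: " + rowsToFillRegion(structuredRow));
        System.out.println("5 MiB rows per Region: " + rowsToFillRegion(imageRow));
    }
}
```

Under these assumptions a Region fills after about two thousand images, compared with about ten million small rows, which is why large objects trigger Splits so much sooner.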

Details

Obtaining the JAR files required to run the HBase API

The JAR dependencies of the HBase API are listed below. The * in each name stands for the package version, which varies with the TDH version you use; go by the packages actually in use on your cluster:

- hbase-client-*-transwarp.jar
- hbase-bson-*-transwarp.jar
- hbase-common-*-transwarp.jar
- hbase-protocol-*-transwarp.jar
- hbase-hyperbase-*-transwarp.jar
- hadoop-common-*-transwarp.jar
- hadoop-auth-*-transwarp.jar
- hadoop-annotations-*-transwarp.jar
- hadoop-mapreduce-client-core-*-transwarp.jar
- elasticsearch-*-transwarp.jar
- zookeeper-*-transwarp.jar
- commons-codec-*.jar
- commons-io-*.jar
- commons-lang-*.jar
- commons-logging-*.jar
- commons-collections-*.jar
- commons-configuration-*.jar
- guava-*.jar
- protobuf-java-*.jar
- netty-*.Final.jar
- htrace-core-*.jar
- jackson-mapper-asl-*.jar
- findbugs-annotations-*.jar
- log4j-*.jar
- slf4j-api-*.jar
- slf4j-log4j12-*.jar
- jsch-*.jar
- jzlib-*.jar
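Because the version part of each name differs between clusters, it can help to check a directory of copied JAR files against the name patterns rather than against exact names. The following is a minimal sketch using only the JDK; the class `JarCheck` and its method are hypothetical helpers, and the pattern list in `main` is only a subset of the full list.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Reports which JAR name patterns have no matching file in a directory. */
final class JarCheck {

    /** Returns the glob patterns that match no file directly inside dir. */
    static List<String> missing(Path dir, List<String> patterns) throws IOException {
        List<String> notFound = new ArrayList<>();
        for (String pattern : patterns) {
            // newDirectoryStream accepts a glob; '*' matches any run of characters in a file name
            try (DirectoryStream<Path> hits = Files.newDirectoryStream(dir, pattern)) {
                if (!hits.iterator().hasNext()) {
                    notFound.add(pattern);
                }
            }
        }
        return notFound;
    }

    public static void main(String[] args) throws IOException {
        // Directory holding the copied JAR files; defaults to the current directory
        Path dir = Paths.get(args.length > 0 ? args[0] : ".");
        // Subset of the patterns for brevity; extend with the remaining entries as needed
        List<String> patterns = Arrays.asList(
                "hbase-client-*-transwarp.jar",
                "hbase-common-*-transwarp.jar",
                "hadoop-common-*-transwarp.jar",
                "zookeeper-*-transwarp.jar",
                "guava-*.jar");
        System.out.println("Missing: " + missing(dir, patterns));
    }
}
```

Running it against the directory you copied the JAR files into prints the patterns that still have no matching file.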

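To obtain the JAR files, enter the container and copy them out with scp:

- Find the pods that run the hyperbase service:

    kubectl get pod|grep hyperbase

- Enter any one of those pods; a regionserver pod is used here as an example:

    kubectl exec -it hyperbase-regionserver-hyperbase1-2285948569-0b7cl bash

- Copy the JAR files out:

    scp /usr/lib/hbase/lib/*.jar 172.22.33.1:/mnt/disk3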

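If your project uses the TDH SDK as its Maven repository, you can use the following pom fragment instead. Take the version numbers from your actual environment:

    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>2.7.2-transwarp-5.2.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hbase</groupId>
        <artifactId>hbase-client</artifactId>
        <version>0.98.6-transwarp-5.2.2</version>
    </dependency>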

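Object Store API example

Notes on using the demo:

- In the conf.set calls, change hbase.zookeeper.quorum, zookeeper.znode.parent, and hbase.zookeeper.property.clientPort to match your cluster. hbase.zookeeper.quorum is the cluster's ZooKeeper quorum; if the other two parameters have not been changed on the cluster, the defaults can be used.

- path in testLOBGet() is the location where the image is saved on Windows.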
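One way to avoid editing the source for every cluster is to resolve the three connection settings from external properties and fall back to fixed values. The sketch below uses only the JDK; the class `ZkSettings` is a hypothetical helper, and the fallback values are simply the ones that appear in the demo, not recommended defaults.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.Properties;

/** Resolves the three ZooKeeper-related settings used by the demo. */
final class ZkSettings {

    /** Reads each key from props, falling back to the demo's value when it is absent. */
    static Map<String, String> resolve(Properties props) {
        Map<String, String> settings = new LinkedHashMap<>();
        // ASSUMPTION: the fallbacks below are copied from the demo; replace them for your cluster
        settings.put("hbase.zookeeper.quorum",
                props.getProperty("hbase.zookeeper.quorum", "mll01,mll02,mll03"));
        settings.put("zookeeper.znode.parent",
                props.getProperty("zookeeper.znode.parent", "/hyperbase1"));
        settings.put("hbase.zookeeper.property.clientPort",
                props.getProperty("hbase.zookeeper.property.clientPort", "2181"));
        return settings;
    }

    public static void main(String[] args) {
        // Values can be supplied with -Dhbase.zookeeper.quorum=... on the command line
        for (Map.Entry<String, String> e : resolve(System.getProperties()).entrySet()) {
            System.out.println(e.getKey() + "=" + e.getValue());
            // In the demo's static block, each entry would be applied with conf.set(key, value)
        }
    }
}
```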

Reference demo:

    package transwarp;

    import static org.junit.Assert.assertTrue;

    import java.io.File;
    import java.io.FileInputStream;
    import java.io.IOException;
    import java.util.concurrent.TimeUnit;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.*;
    import org.apache.hadoop.hbase.client.Get;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.protobuf.generated.HyperbaseProtos;
    import org.apache.hadoop.hbase.util.Bytes;
    import org.apache.hadoop.hyperbase.client.HyperbaseAdmin;
    import org.apache.hadoop.hyperbase.metadata.HyperbaseMetadata;
    import org.apache.hadoop.hyperbase.secondaryindex.IndexedColumn;
    import org.apache.hadoop.hyperbase.secondaryindex.LOBIndex;

    public class Test {

        protected static HyperbaseAdmin admin = null;
        protected static Configuration conf = null;

        static {
            conf = HBaseConfiguration.create();
            // Change these to match your cluster's configuration!
            conf.set("hbase.zookeeper.quorum", "mll01,mll02,mll03");
            conf.set("zookeeper.znode.parent", "/hyperbase1");
            conf.set("hbase.zookeeper.property.clientPort", "2181");
            try {
                admin = new HyperbaseAdmin(conf);
            } catch (IOException e) {
                e.printStackTrace();
            }
        }

        public static void main(String[] args) throws Exception {
            testLOBGet();
        }

        public static void testLOBGet() throws Exception {
            byte[] row = Bytes.toBytes("rowkey01");
            byte[] tableName = Bytes
                    .toBytes("SIMPLE_TEST_PUT_SCAN" + System.nanoTime());
            byte[] indexName = Bytes.toBytes("IDX");
            byte[] family1 = Bytes.toBytes("f1");
            byte[] family2 = Bytes.toBytes("f2");
            // Location of the image on the local (Windows) machine
            String path = "D:\\work\\java\\objectstore\\test\\980f51924e9d409d2949cef6ea47d324.jpg";

            createTable(TableName.valueOf(tableName), family1, family2);
            addLOB(tableName, family1, indexName);

            // Write the image bytes into column f1:q1
            byte[] value01 = getFileBytes(path);
            HTable htable = new HTable(conf, tableName);
            Put put = new Put(row);
            put.add(family1, Bytes.toBytes("q1"), value01);
            htable.put(put);
            htable.flushCommits();
            TimeUnit.SECONDS.sleep(1);

            // Read the row back and check that the value is unchanged
            Get get = new Get(row);
            Result rs = htable.get(get);
            CellScanner cs = rs.cellScanner();
            while (cs.advance()) {
                assertTrue(Bytes.equals(CellUtil.cloneValue(cs.current()), value01));
                System.out.println(Bytes.toString(CellUtil.cloneValue(cs.current())));
            }
            htable.close();
            // admin.deleteTable(TableName.valueOf(tableName));
        }

        public static byte[] getFileBytes(String path) throws IOException {
            File file = new File(path);
            byte[] b = new byte[(int) file.length()]; // buffer sized to the file length
            FileInputStream fis = new FileInputStream(file);
            try {
                int off = 0;
                // read() may return fewer bytes than requested, so loop until the buffer is full
                while (off < b.length) {
                    int n = fis.read(b, off, b.length - off);
                    if (n < 0) {
                        throw new IOException("Unexpected end of file: " + path);
                    }
                    off += n;
                }
            } finally {
                fis.close();
            }
            return b;
        }

        public static void createTable(TableName tableName, byte[]... families) throws Exception {
            HTableDescriptor tableDescriptor = new HTableDescriptor(tableName);
            for (byte[] family : families) {
                tableDescriptor.addFamily(new HColumnDescriptor(family));
            }
            // Note: an Object Store table must have its regions pre-split, and each region
            // should preferably hold no more than 500 GB of data.
            byte[][] splitKeys = new byte[100][];
            for (int i = 0; i < 100; i++) {
                splitKeys[i] = Bytes.toBytes("rowkey" + i);
            }
            // Create the table
            admin.createTable(tableDescriptor, null, splitKeys);
            HyperbaseMetadata metadata = admin.getTableMetadata(tableName);
            assertTrue(metadata != null);
            // Check the metadata of the freshly created table
            assertTrue(metadata.getFulltextMetadata() == null);
            assertTrue(metadata.getGlobalIndexes().isEmpty());
            assertTrue(metadata.getLocalIndexes().isEmpty());
            assertTrue(metadata.getLobs().isEmpty());
            assertTrue(metadata.isTransactionTable() == false);
        }

        public static void addLOB(byte[] tableName, byte[] family, byte[] LOBFamily) throws
                IOException {
            HyperbaseProtos.SecondaryIndex.Builder LOBBuilder = HyperbaseProtos.SecondaryIndex
                    .newBuilder();
            LOBBuilder.setClassName(LOBIndex.class.getName());
            LOBBuilder.setUpdate(true);
            LOBBuilder.setDcop(true);
            IndexedColumn column = new IndexedColumn(family, Bytes.toBytes("q1"));
            LOBBuilder.addColumns(column.toPb());
            // The '2' here means 2 replicas are created on HDFS; in production,
            // make sure this parameter is greater than 1!
            admin.addLob(TableName.valueOf(tableName), new LOBIndex(LOBBuilder.build()), LOBFamily, false, 2);
        }
    }
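The demo builds its split keys as `"rowkey" + i`, which produces keys of different lengths (`rowkey1`, `rowkey10`, ...). Byte-wise ordering then differs from numeric ordering, so the pre-split regions cover uneven key ranges. A possible alternative, sketched below with the JDK only, is to zero-pad the index so that all keys have the same width. The class `SplitKeys` and its method are hypothetical and are not part of the Hyperbase API; the resulting `byte[][]` could be passed where the demo passes `splitKeys`.

```java
import java.nio.charset.StandardCharsets;
import java.util.Locale;

/** Builds fixed-width split keys so that byte order matches numeric order. */
final class SplitKeys {

    /** Returns count keys of the form prefix + zero-padded index, for indexes 0..count-1. */
    static byte[][] zeroPadded(String prefix, int count) {
        if (count <= 0) {
            throw new IllegalArgumentException("count must be positive");
        }
        // Width of the largest index, e.g. 2 digits for indexes 0..99
        int width = String.valueOf(count - 1).length();
        byte[][] keys = new byte[count][];
        for (int i = 0; i < count; i++) {
            String key = String.format(Locale.ROOT, "%s%0" + width + "d", prefix, i);
            keys[i] = key.getBytes(StandardCharsets.UTF_8);
        }
        return keys;
    }

    public static void main(String[] args) {
        byte[][] keys = zeroPadded("rowkey", 100);
        System.out.println("first: " + new String(keys[0], StandardCharsets.UTF_8));
        System.out.println("last:  " + new String(keys[keys.length - 1], StandardCharsets.UTF_8));
    }
}
```

With 100 keys this yields `rowkey00` through `rowkey99`, so a row such as `rowkey01` falls into the region that starts at the split key of the same name. How many regions a real table needs depends on the expected data volume and the per-region limit noted in the demo.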

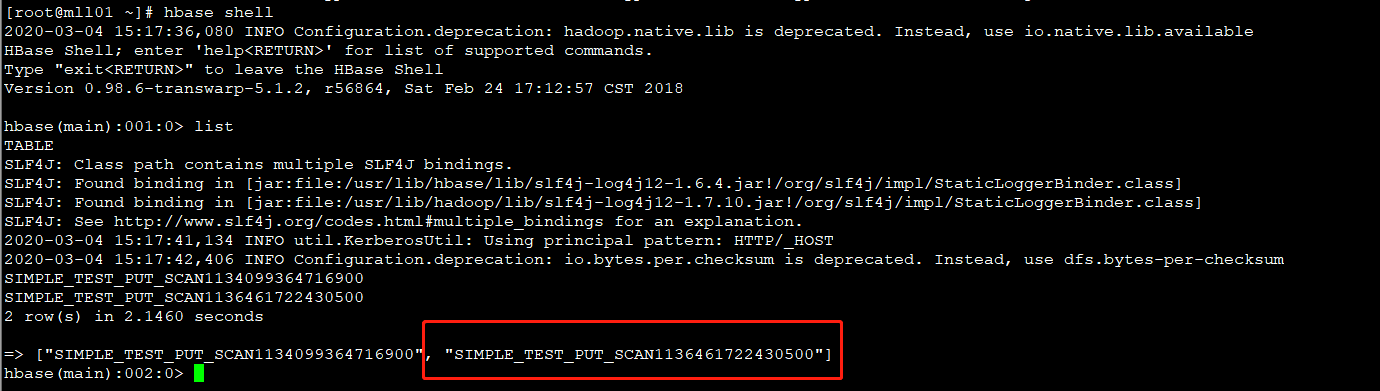

The code above stores the image in the corresponding HBase table.
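The demo prints the fetched value with `Bytes.toString`, which is hard to read for binary image data. A possible alternative for confirming that the stored value matches the source file is to compare digests. The sketch below uses only the JDK; the class `Digests` is a hypothetical helper, and the byte arrays in `main` are stand-ins for the file bytes and the value read back from HBase.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;

/** Computes a printable fingerprint of a byte array. */
final class Digests {

    /** Returns the SHA-256 digest of data as a lowercase hex string. */
    static String sha256Hex(byte[] data) {
        MessageDigest md;
        try {
            md = MessageDigest.getInstance("SHA-256");
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("SHA-256 is not available", e);
        }
        StringBuilder hex = new StringBuilder();
        for (byte b : md.digest(data)) {
            // Two hex digits per byte: high nibble, then low nibble
            hex.append(Character.forDigit((b >> 4) & 0xF, 16));
            hex.append(Character.forDigit(b & 0xF, 16));
        }
        return hex.toString();
    }

    public static void main(String[] args) {
        // Stand-ins for the original file bytes and the bytes fetched from the table
        byte[] original = "example image bytes".getBytes(StandardCharsets.UTF_8);
        byte[] fetched = original.clone();
        System.out.println("source : " + sha256Hex(original));
        System.out.println("fetched: " + sha256Hex(fetched));
        System.out.println("match  : " + sha256Hex(original).equals(sha256Hex(fetched)));
    }
}
```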

Enter the hbase shell to view the generated HBase tables:

    [root@mll01 ~]# hbase shell
    2020-03-04 15:17:36,080 INFO Configuration.deprecation: hadoop.native.lib is deprecated. Instead, use io.native.lib.available
    ....
    hbase(main):001:0> list
    TABLE
    SLF4J: Class path contains multiple SLF4J bindings.
    ...
    => ["SIMPLE_TEST_PUT_SCAN1134099364716900", "SIMPLE_TEST_PUT_SCAN1136461722430500"]

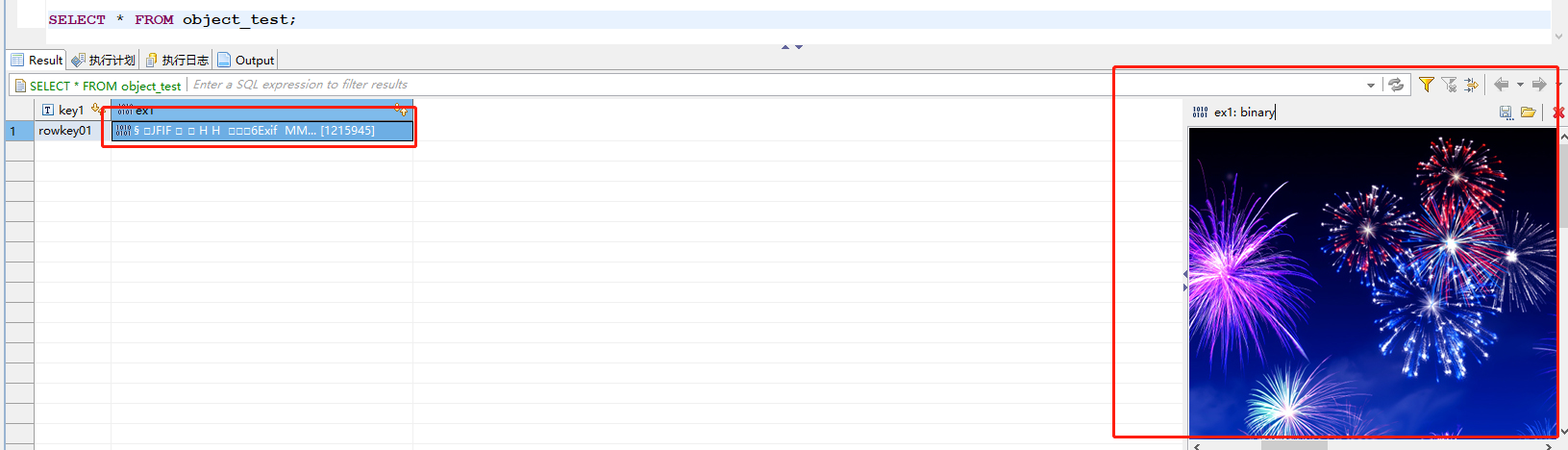

Create an HBase external table in Inceptor

Note: hbase.columns.mapping defines how the columns of the mapping table map to the columns of the HBase table; hbase.table.name gives the name of the HBase table that the mapping table corresponds to.

    CREATE EXTERNAL TABLE object_test(
      key1 string,
      ex1 BLOB
    )
    STORED BY 'org.apache.hadoop.hive.hbase.HBaseStorageHandler'
    WITH SERDEPROPERTIES ("hbase.columns.mapping"=":key,f1:q1")
    TBLPROPERTIES ("hbase.table.name"="SIMPLE_TEST_PUT_SCAN1136461722430500");
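The entries in hbase.columns.mapping are matched to the table's columns by position: the first entry belongs to the first column, the second to the second, and so on. The sketch below illustrates that pairing for the statement above using only the JDK. The class `ColumnMapping` is a hypothetical illustration and is not how Inceptor itself parses the property.

```java
import java.util.ArrayList;
import java.util.List;

/** Pairs table columns with the entries of an hbase.columns.mapping string. */
final class ColumnMapping {

    /** Returns one description per column, e.g. "ex1 -> family f1, qualifier q1". */
    static List<String> pair(String[] tableColumns, String mapping) {
        String[] entries = mapping.split(",");
        if (entries.length != tableColumns.length) {
            throw new IllegalArgumentException("mapping has " + entries.length
                    + " entries but the table has " + tableColumns.length + " columns");
        }
        List<String> result = new ArrayList<>();
        for (int i = 0; i < entries.length; i++) {
            String entry = entries[i].trim();
            String target;
            if (entry.equals(":key")) {
                target = "row key";                 // special entry for the HBase row key
            } else {
                int colon = entry.indexOf(':');     // family and qualifier are separated by ':'
                if (colon < 0) {
                    throw new IllegalArgumentException("expected family:qualifier, got " + entry);
                }
                target = "family " + entry.substring(0, colon)
                        + ", qualifier " + entry.substring(colon + 1);
            }
            result.add(tableColumns[i] + " -> " + target);
        }
        return result;
    }

    public static void main(String[] args) {
        for (String line : pair(new String[] {"key1", "ex1"}, ":key,f1:q1")) {
            System.out.println(line);
        }
    }
}
```

For the table above, key1 is bound to the HBase row key and ex1 to column q1 in family f1, which is the column the demo wrote the image to.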


Query the stored image in Inceptor

    SELECT * FROM object_test;